Head Analysis

1. Frontalization

Create a frontal view of a face from a lateral (side) view.

When a camera captures human faces from arbitrary viewpoints, the pictures can be aligned to a specific position or orientation, in particular to the frontal view. A face recognition system may require this. Frontalization is one of the features of this project: it estimates and renders a frontal face from an input image of a lateral face.

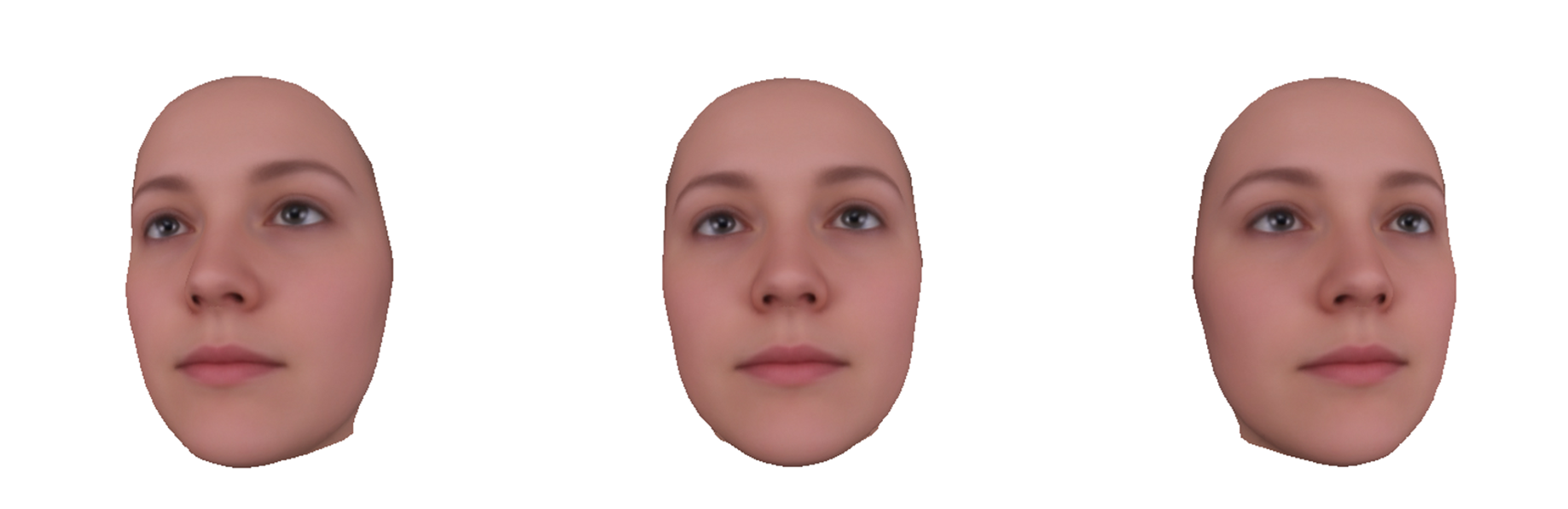

Rendering 3D Face Model

Creating a frontal face requires a 3D face model. The model lets us estimate frontal faces from lateral ones, which carry no depth information of their own. I found the object and texture files used here through a web search; they represent an average female face. In my judgment, this model produces reasonable results compared with the other models I found.

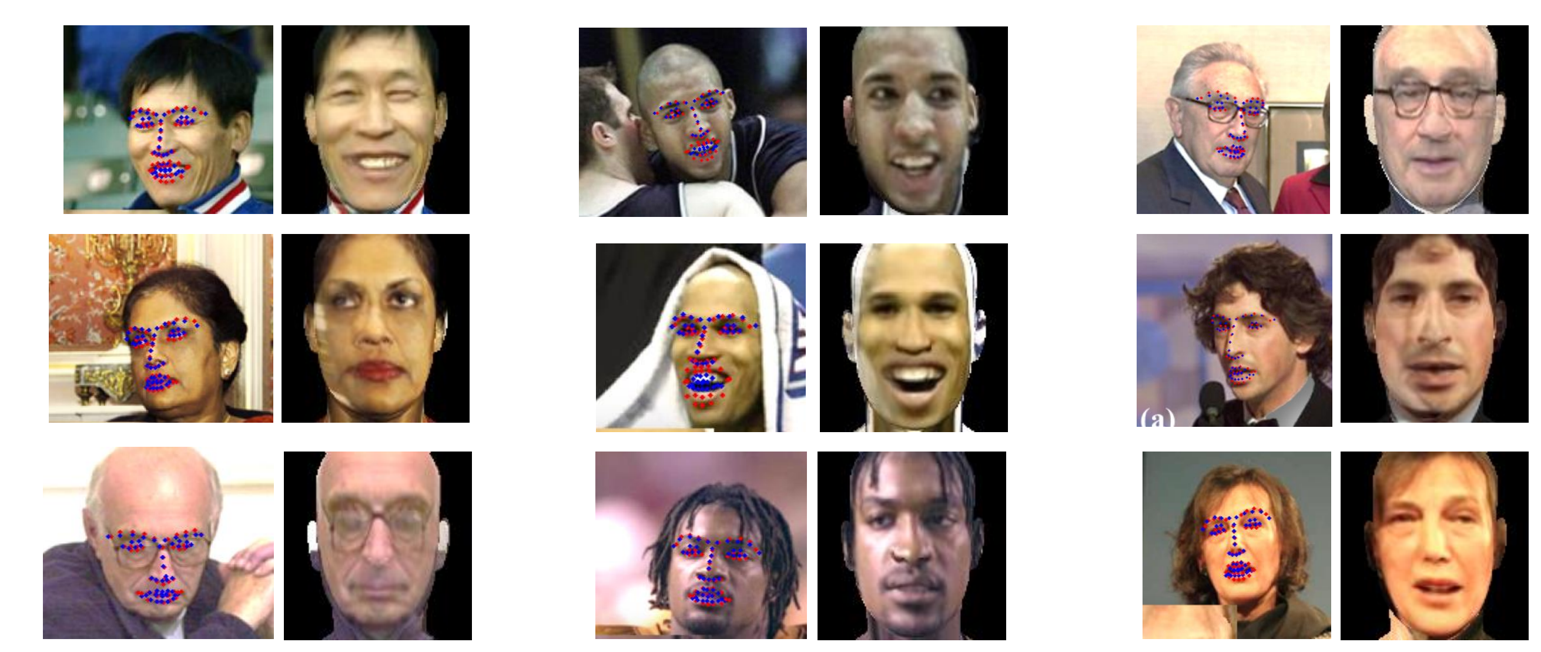

Landmark Alignment

With the 3D face model prepared, we can align the model and the query image using landmarks detected by a library such as Dlib. This alignment strongly affects the final result because the whole estimation is based on it. Editing the landmark set (adding, removing, or modifying points) is therefore critical. I chose the landmarks shown above and left out the jaw line points because the jaw line is too sensitive to each query image.
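The jaw line exclusion can be sketched as a simple index filter. This is only an illustration, assuming the 68-point layout of Dlib's standard shape predictor, in which indices 0 to 16 trace the jaw line. The helper name `drop_jaw` and the dummy points are hypothetical, and the exact landmark subset used in this project may differ.

```python
# Assumption: the 68-point landmark layout of Dlib's standard shape predictor,
# where indices 0..16 run along the jaw line.
JAW_INDICES = range(0, 17)


def drop_jaw(landmarks):
    """Return (index, point) pairs for every landmark except the jaw line.

    Keeping the original index makes it possible to look up the matching
    3D point on the face model later.
    """
    if len(landmarks) != 68:
        raise ValueError("expected 68 landmarks, got %d" % len(landmarks))
    return [(i, p) for i, p in enumerate(landmarks) if i not in JAW_INDICES]


# Dummy 2D points standing in for real detector output.
points = [(float(i), 2.0 * i) for i in range(68)]
kept = drop_jaw(points)
print(len(kept))  # 51 landmarks remain
```

The same index list has to be applied to the 3D model landmarks so that the 2D and 3D points stay in one-to-one correspondence.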

Frontalize [1]

Applying [1] gives the frontalization results shown above. Although the authors of [1] provide their own code, I could not find a C++ version. In addition, I needed to run it with unknown camera intrinsic parameters. I therefore refactored and slightly modified their code for the frontalization feature of this head analysis project. The query samples above were captured manually from the paper [1] because I could not find the original images.

Limitation

The quality of the result decreases as the yaw angle increases. This seems reasonable because the amount of visible facial information shrinks as the yaw angle grows. Likewise, when we see someone in extreme profile or from behind, it is hard to imagine their frontal face, and it is common to be surprised by the difference between what we saw and what we imagined.

2. Lateralization

Create a lateral view of a face from a frontal view.

Here, the process reverses what frontalization does. I coined the term ‘lateralization’ by analogy with ‘frontalization’, so it is not an official term. It takes a frontal face and creates a lateral face rotated by the Euler angles the user defines.
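As a rough illustration of what "rotated by the Euler angles" means, the sketch below builds a rotation matrix from yaw, pitch, and roll and applies it to a 3D point. The axis assignment (yaw about y, pitch about x, roll about z) and the composition order R = Ry · Rx · Rz are assumptions chosen for the example, not necessarily the convention used in this project.

```python
import math


def matmul3(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def euler_to_rotation(yaw, pitch, roll):
    """Build R = Ry(yaw) * Rx(pitch) * Rz(roll); angles are in radians.

    Assumed convention: yaw about the y axis, pitch about x, roll about z.
    """
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    ry = [[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]]
    rx = [[1.0, 0.0, 0.0], [0.0, cp, -sp], [0.0, sp, cp]]
    rz = [[cr, -sr, 0.0], [sr, cr, 0.0], [0.0, 0.0, 1.0]]
    return matmul3(ry, matmul3(rx, rz))


def rotate(r, point):
    """Apply the 3x3 rotation r to a 3D point."""
    return [sum(r[i][j] * point[j] for j in range(3)) for i in range(3)]


# A point on the z axis (roughly where a nose tip would be on a frontal face)
# moves onto the x axis after a 90 degree yaw.
r = euler_to_rotation(math.radians(90.0), 0.0, 0.0)
nose = rotate(r, [0.0, 0.0, 1.0])
print([round(c, 6) for c in nose])
```

Because conventions vary between libraries, the order and axes should be checked against whatever produces the angles in practice.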

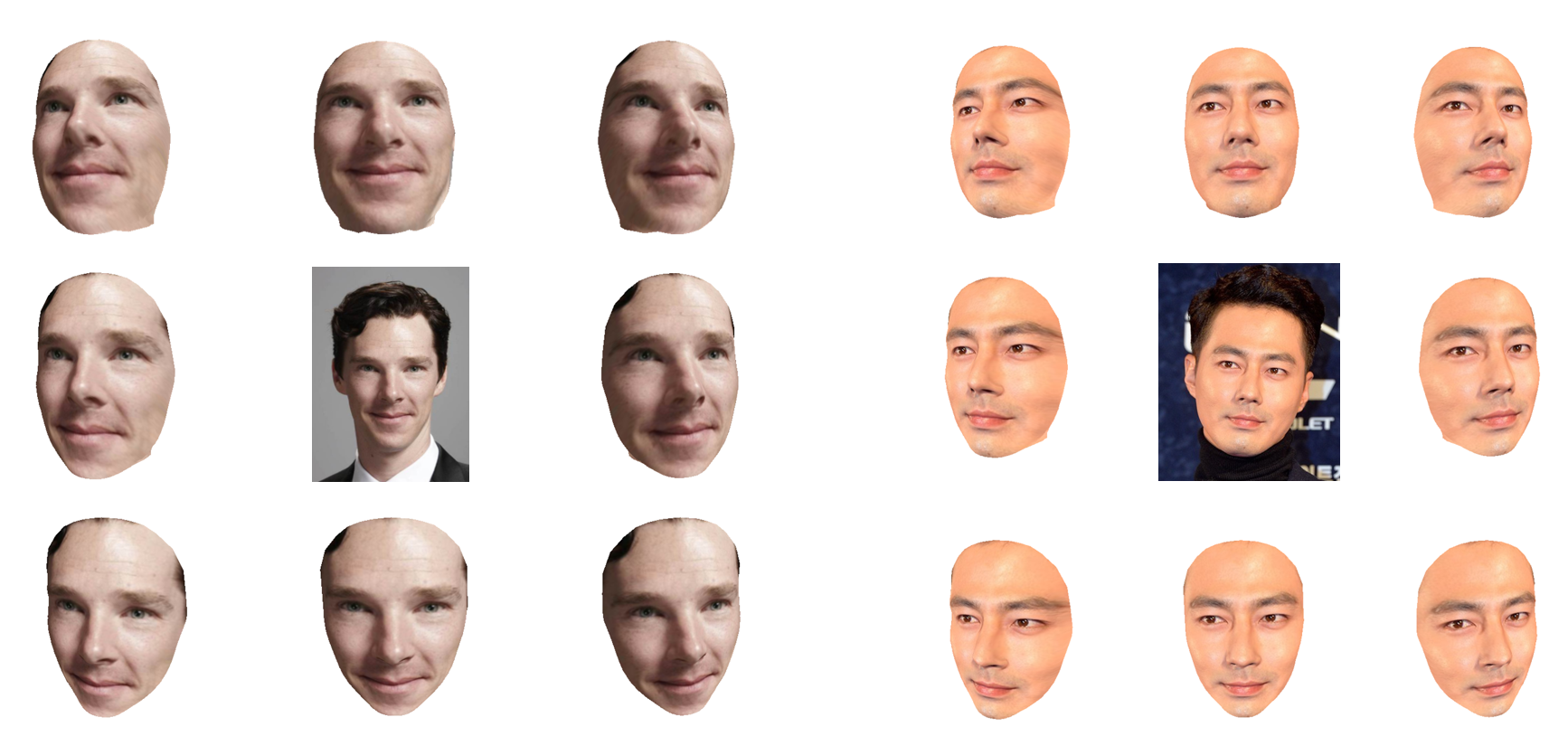

Camera Position Estimation

Like frontalization, lateralization needs the 3D face model and must align the landmarks of the model with those of the query image, which is frontal here. With this information, we estimate the position of the virtual camera that would have taken the query image. There are many methods for this, and many libraries provide functions for it, such as solvePnP() in OpenCV.
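solvePnP() expects a camera matrix together with the 3D-2D landmark correspondences. When the intrinsics are unknown, a common approximation is to set the focal length to the image width and the principal point to the image center. The sketch below builds such a matrix and projects a model point with it, which is the relation a pose solver tries to satisfy. The function names and the numbers are hypothetical, and this approximation is an assumption rather than the exact choice made in this project.

```python
def approximate_camera_matrix(width, height):
    """Rough pinhole intrinsics for an uncalibrated image.

    Assumption: focal length equal to the image width (in pixels) and the
    principal point at the image center, with no lens distortion.
    """
    f = float(width)
    return [[f, 0.0, width / 2.0],
            [0.0, f, height / 2.0],
            [0.0, 0.0, 1.0]]


def project(k, r, t, point):
    """Project a 3D model point to pixel coordinates: x ~ K (R X + t)."""
    # Transform the point from model coordinates to camera coordinates.
    cam = [sum(r[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)]
    # Apply the intrinsics, then divide by depth.
    hom = [sum(k[i][j] * cam[j] for j in range(3)) for i in range(3)]
    return (hom[0] / hom[2], hom[1] / hom[2])


K = approximate_camera_matrix(640, 480)
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # camera looks straight at the model
t = [0.0, 0.0, 1000.0]                                    # model 1000 units in front of the camera

print(project(K, R, t, [0.0, 0.0, 0.0]))    # model origin lands on the image center
print(project(K, R, t, [100.0, 0.0, 0.0]))  # a point to the right shifts along u
```

A pose solver works in the opposite direction: given K and several landmark pairs, it searches for the R and t that make these projections match the detected 2D landmarks.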

Lateralize

After estimating the virtual camera position, we can create a lateral face for a specific Euler angle. In the figure above, each celebrity face in the center is surrounded by eight lateral faces created at different angles. These have better quality than the frontalization results because a frontal query image carries more information about the face texture than a lateral one does.

3. Head Position (Orientation) Estimation

Estimate the direction in which the head points, that is, its orientation.

If we can create a frontal face from a lateral one and vice versa, we can also determine the orientation of that face. Simply put, frontalization and lateralization both come down to finding out how much and in which direction the query face should be rotated. There are many resources on this, including [2].

Projection Matrix Decomposition

While frontalization and lateralization need only the projection matrix of the virtual camera, estimating the head orientation requires decomposing this matrix. In fact, only the rotation matrix is needed, so it has to be extracted from the estimated projection matrix. [3] gives an excellent explanation of this.
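One way to carry out this extraction is an RQ-style factorization of the left 3x3 block of P = K [R | t], done here with Gram-Schmidt on the rows from bottom to top. The sketch below is a minimal pure-Python version under stated assumptions: P has the form K [R | t] up to scale, K is upper triangular with a positive diagonal, and the Euler convention is R = Ry(yaw) · Rx(pitch) · Rz(roll). It is not necessarily the procedure described in [3] or the one used in this project; OpenCV also offers decomposeProjectionMatrix() for the same purpose.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def decompose_projection(p):
    """Split the left 3x3 block of P = K [R | t] into K (upper triangular) and R."""
    m = [row[:3] for row in p]
    if det3(m) < 0:  # P is only defined up to scale, so fix the sign first
        m = [[-x for x in row] for row in m]
    m1, m2, m3 = m
    # Bottom row of K is (0, 0, k33), so the third row of M is k33 * r3.
    k33 = math.sqrt(dot(m3, m3))
    r3 = [x / k33 for x in m3]
    # Second row: m2 = k22 * r2 + k23 * r3.
    k23 = dot(m2, r3)
    v = [a - k23 * b for a, b in zip(m2, r3)]
    k22 = math.sqrt(dot(v, v))
    r2 = [x / k22 for x in v]
    # First row: m1 = k11 * r1 + k12 * r2 + k13 * r3.
    k12, k13 = dot(m1, r2), dot(m1, r3)
    w = [a - k12 * b - k13 * c for a, b, c in zip(m1, r2, r3)]
    k11 = math.sqrt(dot(w, w))
    r1 = [x / k11 for x in w]
    # Normalize K so that its bottom-right entry is 1.
    k = [[k11 / k33, k12 / k33, k13 / k33], [0.0, k22 / k33, k23 / k33], [0.0, 0.0, 1.0]]
    return k, [r1, r2, r3]


def rotation_to_euler(r):
    """Recover (yaw, pitch, roll) in radians, assuming R = Ry * Rx * Rz and |pitch| < 90 degrees."""
    pitch = math.asin(max(-1.0, min(1.0, -r[1][2])))
    yaw = math.atan2(r[0][2], r[2][2])
    roll = math.atan2(r[1][0], r[1][1])
    return yaw, pitch, roll


# Synthetic check: build P from known intrinsics and a 30 degree yaw, then recover both.
a = math.radians(30.0)
R_true = [[math.cos(a), 0.0, math.sin(a)], [0.0, 1.0, 0.0], [-math.sin(a), 0.0, math.cos(a)]]
K_true = [[640.0, 0.0, 320.0], [0.0, 640.0, 240.0], [0.0, 0.0, 1.0]]
t_true = [0.0, 0.0, 1000.0]
Rt = [R_true[i] + [t_true[i]] for i in range(3)]
P = [[sum(K_true[i][k] * Rt[k][j] for k in range(3)) for j in range(4)] for i in range(3)]

K_est, R_est = decompose_projection(P)
yaw, pitch, roll = rotation_to_euler(R_est)
print([round(math.degrees(x), 3) for x in (yaw, pitch, roll)])
```

Near a pitch of 90 degrees, yaw and roll are no longer separable (gimbal lock), so a real implementation would need to handle that case explicitly.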

References

[1] Effective Face Frontalization in Unconstrained Images

[2] Head Pose Estimation using OpenCV and Dlib

[3] John F. Hughes, Andries van Dam, Morgan McGuire, David F. Sklar, James D. Foley, Steven K. Feiner, and Kurt Akeley, Computer Graphics: Principles and Practice (3rd Edition)