Fire Detection

- 1. Candidate Extraction

- 2. Red Channel Based Detection

- 3. Covariance Based Detection

- 4. Flow Rate Based Detection

- References

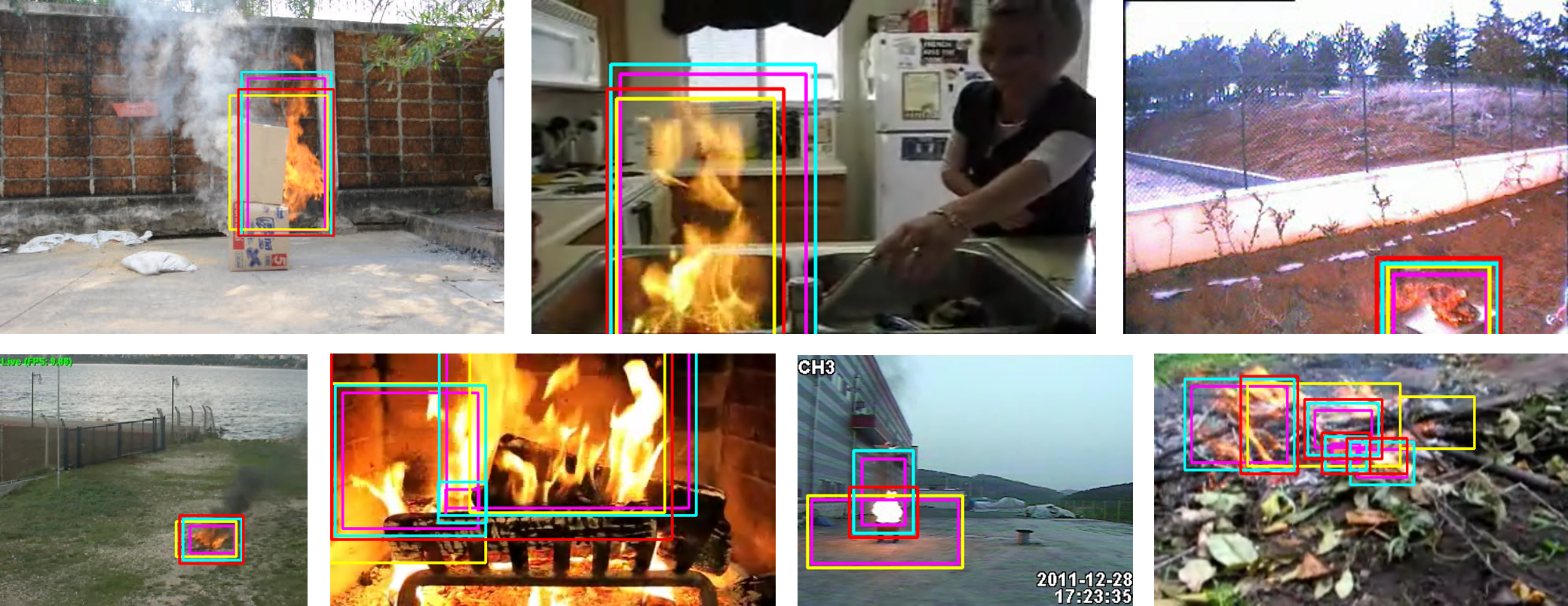

This project gathers several rule-based fire detection algorithms under one framework. Because so many rule-based algorithms exist, I wrote it with extension in mind, so other algorithms can be added and the existing ones can be modified. Most of the sample videos I tested come from [1] and [2].

1. Candidate Extraction

Fire candidates are extracted first, because searching only the likely fire regions is cheaper than searching the whole frame. Common approaches are to set a fire-like RGB color range or to learn color weights through machine learning; I applied the color weight algorithm from [3] as pre-processing. Color balancing such as [4] can also be added for nighttime scenes.
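To make the idea of a fire-like color range concrete, here is a minimal sketch in plain Python. It is not the color weight algorithm from [3]; it assumes a simple hand-set rule (red above a threshold and R > G > B), and the names `fire_color_mask`, `bounding_box`, and `red_min` are hypothetical.

```python
def fire_color_mask(frame, red_min=180):
    """Mark pixels whose color falls in an assumed fire-like range.

    frame is a list of rows, each row a list of (r, g, b) tuples.
    The rule (r >= red_min and r > g > b) is an illustrative assumption.
    """
    return [[r >= red_min and r > g > b for (r, g, b) in row] for row in frame]


def bounding_box(mask):
    """Return (top, left, bottom, right) of the marked pixels, or None."""
    coords = [(y, x) for y, row in enumerate(mask)
              for x, hit in enumerate(row) if hit]
    if not coords:
        return None
    ys = [y for y, _ in coords]
    xs = [x for _, x in coords]
    return (min(ys), min(xs), max(ys), max(xs))


# Tiny 3x3 frame: three orange pixels surrounded by dark gray ones.
frame = [
    [(10, 10, 10), (20, 20, 20), (15, 15, 15)],
    [(12, 12, 12), (240, 150, 40), (250, 160, 30)],
    [(11, 11, 11), (230, 120, 20), (30, 30, 30)],
]
box = bounding_box(fire_color_mask(frame))
print(box)  # (1, 1, 2, 2)
```

The later detectors would then look only inside `box` instead of scanning the whole frame.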

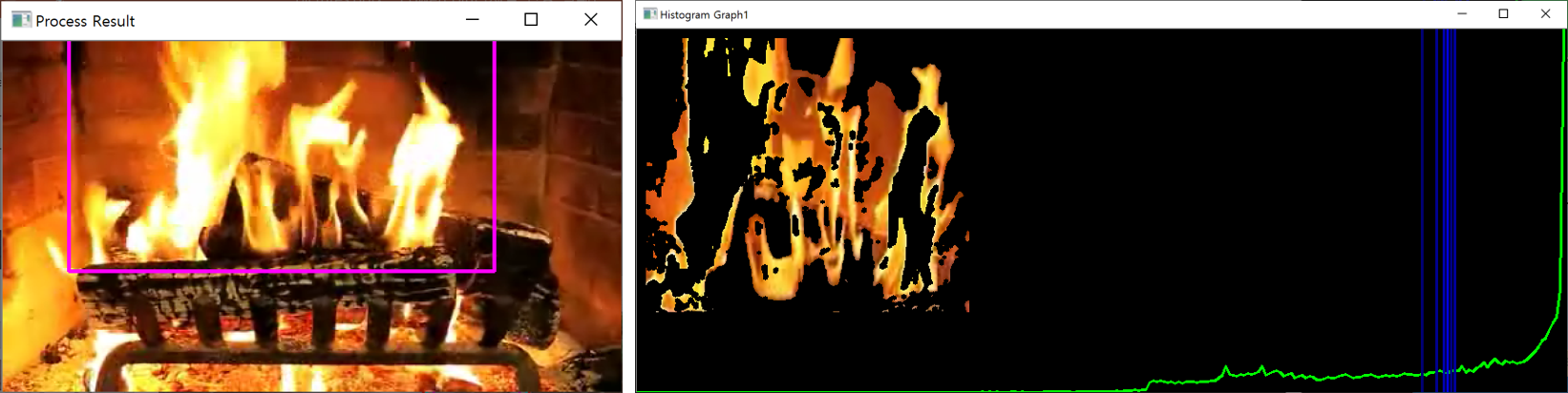

2. Red Channel Based Detection

Fire-like colors usually have a noticeable red component, although not all do. A fire also generally flickers. Red channel based detection relies on these two features: the red component of a fire stands out, and it flickers. More specifically, the red component of the candidate region is observed and the average of its histogram is calculated. If the region is actually a fire, the average fluctuates around the high-intensity end. In the figure above, the magenta box in the left image is the candidate region and the right image shows its histogram. The blue vertical bars are the histogram averages of each frame.
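The check described here can be sketched as follows. This is only an illustration under stated assumptions: the thresholds `high` and `min_std` are made-up values, the function names are hypothetical, and the standard deviation of the per-frame averages stands in for "fluctuates".

```python
from statistics import mean, pstdev


def red_mean(region):
    """Average red intensity of a region given as rows of (r, g, b) tuples.

    This equals the average of the red-channel histogram.
    """
    return mean(r for row in region for (r, _g, _b) in row)


def looks_like_flicker(red_means, high=170.0, min_std=5.0):
    """True when the per-frame red average stays high and keeps fluctuating.

    high and min_std are illustrative thresholds, not tuned values.
    """
    if len(red_means) < 2:
        return False
    return mean(red_means) >= high and pstdev(red_means) >= min_std


# Per-frame red averages of three hypothetical candidate regions.
flame = [200, 215, 190, 225, 195, 220]    # high and fluctuating
red_car = [210, 210, 211, 210, 209, 210]  # high but steady
dim_blink = [50, 80, 40, 90]              # fluctuating but not red enough

print(looks_like_flicker(flame))      # True
print(looks_like_flicker(red_car))    # False
print(looks_like_flicker(dim_blink))  # False
```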

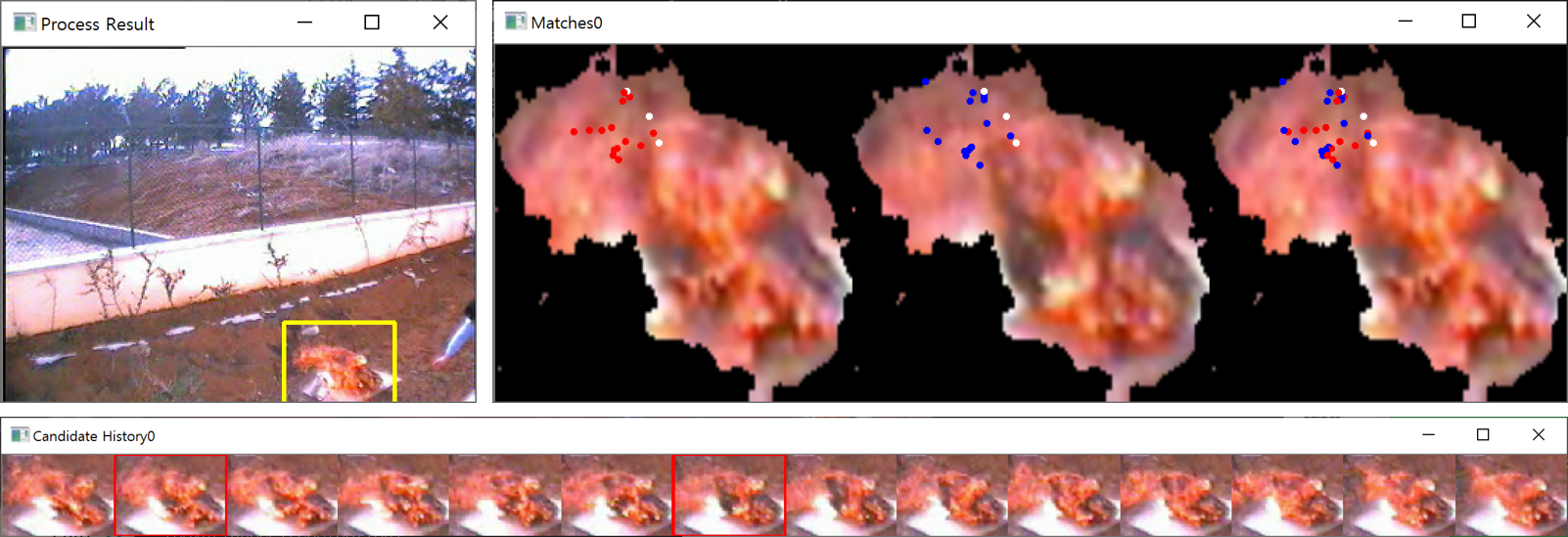

3. Covariance Based Detection

This algorithm uses the fire features defined in [5], which are spatio-temporal features that include the RGB values and their derivatives. I apply them with a modification: whereas [5] feeds the features to an SVM training system, I search for the most similar features over some period using NCC (normalized cross-correlation). Once the most similar features are found, their optical flow is calculated. If they come from a real fire, the flow looks almost random. On the other hand, if they belong to a static or moving object, the flow is either static or moves in one direction. It is therefore possible to tell whether the features come from a real fire by observing the delta set of the flow. Even if an object is moving, the delta set of its flow is small because the flow stays consistent in a specific direction. In the figure above, the two red-boxed images in the bottom image are the most similar pair. As a result, the fire is detected as the yellow box in the top-left image because their flow delta is big enough.
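The two building blocks mentioned above, NCC matching and the flow delta test, might look like this in plain Python. This is a sketch under assumptions: feature vectors are flat lists of numbers, the flow of a matched feature is a list of per-frame `(dx, dy)` displacements, and the delta set is summarized by its mean magnitude. The function names and the threshold of 1.0 are hypothetical.

```python
import math


def ncc(a, b):
    """Normalized cross-correlation of two equal-length feature vectors."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    da = [x - ma for x in a]
    db = [x - mb for x in b]
    denom = math.sqrt(sum(x * x for x in da) * sum(x * x for x in db))
    # A constant vector has no variation to correlate; report 0 in that case.
    return sum(x * y for x, y in zip(da, db)) / denom if denom else 0.0


def flow_delta_spread(flows):
    """Mean magnitude of the change between consecutive flow vectors."""
    deltas = [(x2 - x1, y2 - y1)
              for (x1, y1), (x2, y2) in zip(flows, flows[1:])]
    if not deltas:
        return 0.0
    return sum(math.hypot(dx, dy) for dx, dy in deltas) / len(deltas)


# A flame-like flow changes direction every frame; a moving object does not.
flame_flow = [(1.5, -2.0), (-2.0, 1.0), (0.5, 2.5), (-1.0, -2.0)]
moving_flow = [(2.0, 0.1), (2.1, 0.0), (1.9, 0.1), (2.0, 0.0)]

threshold = 1.0  # illustrative value only
print(flow_delta_spread(flame_flow) > threshold)   # True
print(flow_delta_spread(moving_flow) > threshold)  # False
```

Note that the moving object has a large flow but a small delta, which is why the delta set rather than the flow itself is compared against the threshold.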

However, this algorithm must also distinguish a real fire from a non-fire object that looks like a fire. Thanks to the features from [3], it can identify the non-fire object from the fact that its most similar features are also close enough in the feature space. In the figure above, the two blue-boxed images in the bottom image are the most similar pair but are not detected as a fire.

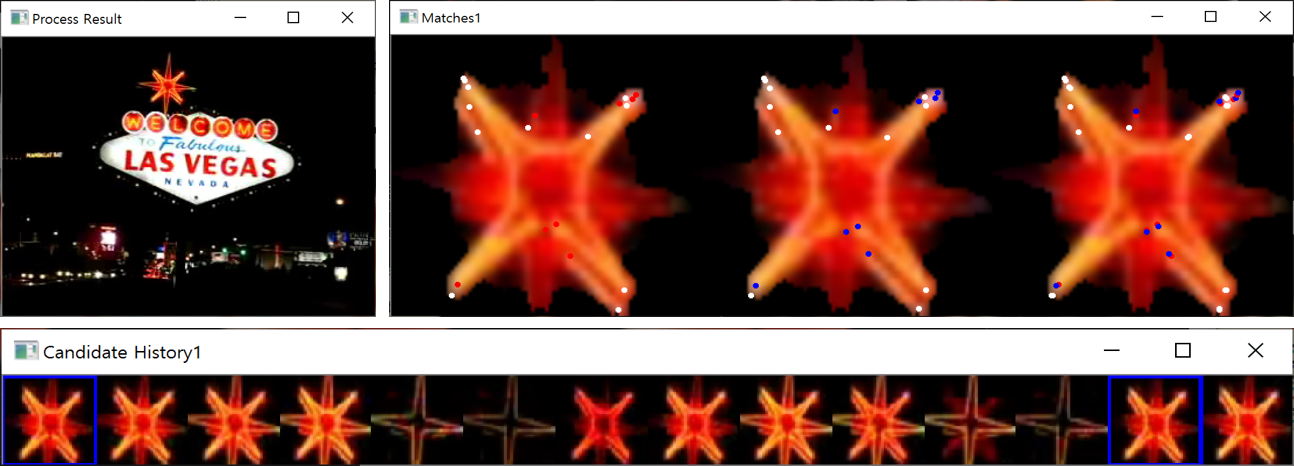

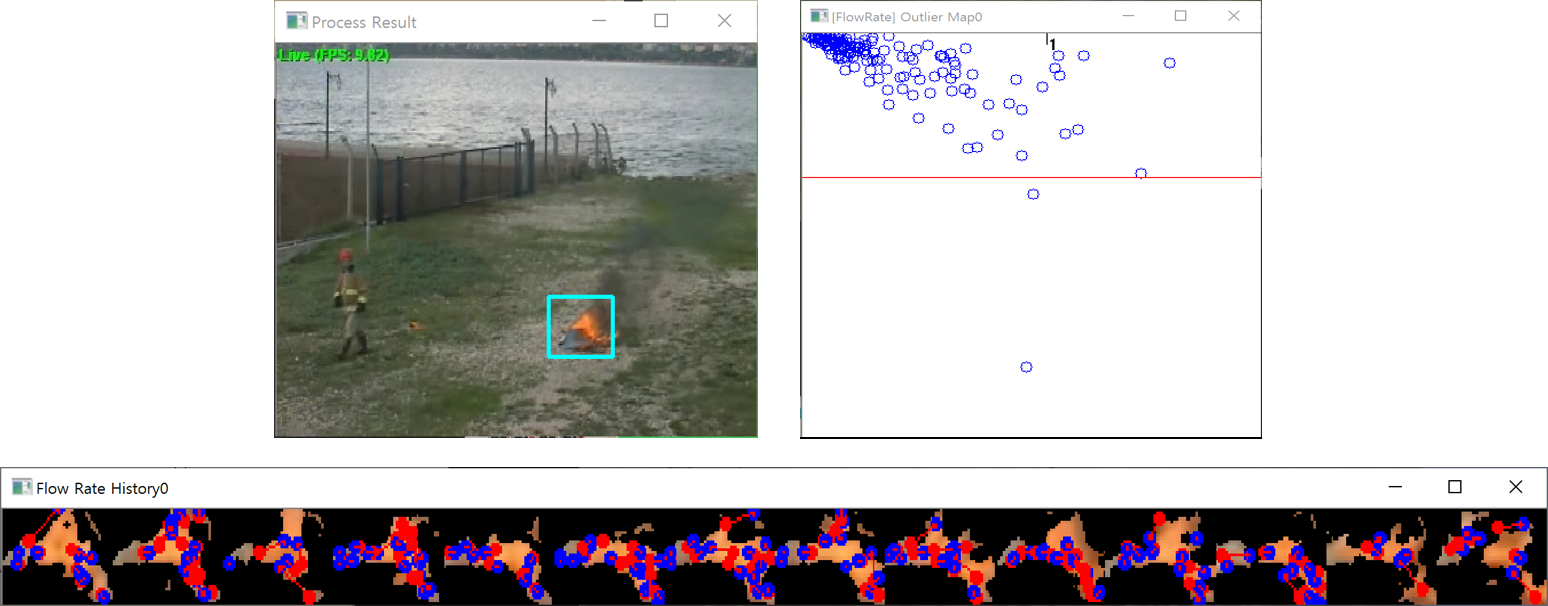

4. Flow Rate Based Detection

The most intuitive way to detect a fire is to check how active the candidate region is. If a feature in the region moves arbitrarily, the region likely includes a fire. This algorithm is somewhat similar to the covariance based algorithm, but it checks only the activeness of the flow. Once this information has been collected over some period, PCA can determine numerically whether the region includes a fire. There may be some noise, so outliers have to be removed. The top-right image shows the PCA result for the cyan region in the top-left image. The red line is the separator used to remove outliers: only the points above this line are valid.
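As a rough illustration of how PCA can turn collected flow samples into a number, the sketch below computes the two principal variances of a set of 2-D flow vectors in closed form. Several things are assumptions here: the samples are `(dx, dy)` displacements, the smaller principal variance is used as the activity score, and the threshold of 0.5 is arbitrary. Outlier removal is left out because the text does not specify how the separator line is chosen.

```python
import math


def pca_2d(points):
    """Return (major, minor) principal variances of 2-D points."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points) / n
    syy = sum((y - my) ** 2 for _, y in points) / n
    sxy = sum((x - mx) * (y - my) for x, y in points) / n
    # Eigenvalues of the 2x2 covariance matrix [[sxx, sxy], [sxy, syy]].
    half_trace = (sxx + syy) / 2
    root = math.sqrt(((sxx - syy) / 2) ** 2 + sxy ** 2)
    return half_trace + root, max(0.0, half_trace - root)


# Flow samples collected over some period for two hypothetical regions.
flame = [(1.5, -2.0), (-2.0, 1.0), (0.5, 2.5),
         (-1.0, -2.0), (2.0, 1.5), (-1.5, -1.0)]
moving = [(2.0, 0.1), (2.1, 0.0), (1.9, 0.1),
          (2.0, 0.0), (2.2, 0.1), (1.8, 0.0)]

min_activity = 0.5  # illustrative threshold on the minor variance
print(pca_2d(flame)[1] > min_activity)   # True
print(pca_2d(moving)[1] > min_activity)  # False
```

Using the minor variance means a region scores as active only when its flow spreads in every direction, which a steadily moving object does not do.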

References

[1] Qureshi, W.S., Ekpanyapong, M., Dailey, M.N., et al., QuickBlaze: early fire detection using a combined video processing approach, Fire Technol., 2016, 55, pp. 1293–1317

[2] FIRESENSE database of videos for flame and smoke detection

[3] J. Choi and J. Y. Choi, Patch-based fire detection with online outlier learning, 2015 12th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS), Karlsruhe, 2015, pp. 1-6

[4] Color Constancy Algorithms

[5] Y. H. Habiboğlu, O. Günay and A. Cetin, Covariance matrix-based fire and flame detection method in video, Machine Vision and Applications, 2011, 23, pp. 1-11, doi:10.1007/s00138-011-0369-1